Device allows paralyzed man to communicate with words

July 27, 2021

At a Glance

- Researchers developed a device to decode brain activity into words, allowing a person with paralysis to communicate in complete sentences.

- The device could improve the quality of life for people with severe paralysis who cannot speak by enabling more efficient and natural communication.

Conditions such as stroke and neurodegenerative disease can cause anarthria, the loss of the ability to articulate speech. Anarthria hinders communication and reduces quality of life. Researchers have been working to create brain-computer interfaces that help people with anarthria communicate. With a typical interface, the user must spell out messages one letter at a time. An interface that lets the user generate whole words would allow much more efficient and natural communication.

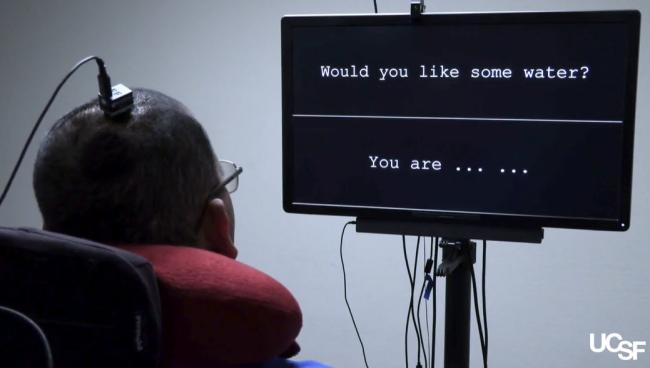

A team of researchers, led by Dr. Edward F. Chang at the University of California, San Francisco, developed a “speech neuroprosthesis.” This device directly decodes brain activity into words and sentences in real time. NIH’s National Institute on Deafness and Other Communication Disorders (NIDCD) is supporting the ongoing work. Results from the first participant appeared in the New England Journal of Medicine on July 15, 2021.

A 36-year-old man, identified as “Bravo-1,” had been severely paralyzed for 16 years due to a brain stem stroke. The researchers implanted an array of electrodes onto the left sensorimotor cortex of Bravo-1’s brain. This part of the brain includes several regions implicated in speech processing.

Once the device was implanted, Bravo-1 and the researchers trained the system over the course of a year and a half. During each training session, Bravo-1 attempted to say each of 50 vocabulary words, and sentences with these words, many times. The electrodes recorded the brain activity associated with each attempt and transmitted it to a computer. Across 48 such sessions, the team recorded 22 hours of data.

The researchers used machine learning to recognize patterns in the brain activity and associate them with the word the user was trying to say. They also applied a model that predicted the next word in a sequence based on English word-use patterns. This provided an “autocorrect” function like that used in texting and speech recognition software.
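
The idea of combining a per-word classifier with a next-word model can be illustrated with a small toy example. The sketch below is not the study's software: the function name `decode_sentence`, the four-word vocabulary, and every probability are invented for illustration. It assumes the classifier outputs a probability for each vocabulary word on each attempt, and it uses a simple bigram (previous word, next word) table with a Viterbi-style search to pick the most plausible sentence.

```python
import math

FLOOR = 1e-6  # assumed small probability for anything not listed in a table


def decode_sentence(word_probs, bigram, vocab, lm_weight=1.0):
    """Return the word sequence with the best combined score.

    word_probs: one dict per attempted word, mapping word -> classifier probability.
    bigram:     dict mapping (previous_word, word) -> next-word probability.
    lm_weight:  how strongly the next-word model influences the result.
    """
    # best[w] = (log score of the best sequence ending in w, that sequence)
    best = {w: (math.log(word_probs[0].get(w, FLOOR)), [w]) for w in vocab}
    for probs in word_probs[1:]:
        new_best = {}
        for w in vocab:
            # Pick the previous word that makes w most plausible.
            score, path = max(
                (s + lm_weight * math.log(bigram.get((p, w), FLOOR)), seq)
                for p, (s, seq) in best.items()
            )
            new_best[w] = (score + math.log(probs.get(w, FLOOR)), path + [w])
        best = new_best
    return max(best.values())[1]


# Hypothetical data: the classifier slightly prefers "an" for the second word.
vocab = ["i", "am", "an", "thirsty"]
attempts = [
    {"i": 0.90, "am": 0.05, "an": 0.03, "thirsty": 0.02},
    {"i": 0.05, "am": 0.40, "an": 0.50, "thirsty": 0.05},
    {"i": 0.04, "am": 0.03, "an": 0.03, "thirsty": 0.90},
]
bigram = {
    ("i", "am"): 0.80,
    ("i", "an"): 0.01,
    ("am", "thirsty"): 0.50,
    ("an", "thirsty"): 0.01,
}

print(decode_sentence(attempts, bigram, vocab))                 # ['i', 'am', 'thirsty']
print(decode_sentence(attempts, bigram, vocab, lm_weight=0.0))  # ['i', 'an', 'thirsty']
```

With the next-word model switched off (`lm_weight=0.0`), the classifier's mistake on the second word goes through unchanged. With it switched on, the unlikely pairing "i an" is outweighed and the sentence is corrected, which is the “autocorrect” effect in miniature.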

To test the neuroprosthesis, Bravo-1 attempted to say sentences using the 50-word vocabulary while the device decoded his brain activity in real time. The system decoded about 15 words per minute on average. The researchers noted that speech-decoding approaches become usable when the error rate is below 30%. The error rate for this system was about 25%. Speech-related brain activity patterns were stable throughout the 81-week study period. As a result, the researchers did not need to recalibrate the model to maintain accuracy.
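
To make the error-rate figures concrete, here is a minimal sketch of how a word error rate is commonly computed for speech decoders. It assumes the quoted percentages are word error rates, meaning the number of word substitutions, insertions, and deletions needed to turn the decoded sentence into the intended one, divided by the length of the intended sentence. The function name `word_error_rate` and the example sentences are invented for illustration and are not taken from the study.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference sentence must contain at least one word")

    # prev[j] = edits needed to turn the first j decoded words
    # into the reference words processed so far.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            curr.append(min(
                prev[j] + 1,                           # reference word missing from output
                curr[j - 1] + 1,                       # extra word in output
                prev[j - 1] + (ref_word != hyp_word),  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# One wrong word in a four-word sentence.
print(word_error_rate("i am very thirsty", "i am not thirsty"))  # 0.25
```

In this example a single wrong word in a four-word sentence gives an error rate of 25%, the same order as the figure reported for the system.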

Although the study involved only a single participant and a limited vocabulary, the results are a significant advance. “This is an important technological milestone for a person who cannot communicate naturally, and it demonstrates the potential for this approach to give a voice to people with severe paralysis and speech loss,” says co-lead author Dr. David Moses.

The team is working to increase the size of the vocabulary and the rate of speech. They also plan to conduct follow-up trials with more participants.

—by Brian Doctrow, Ph.D.

Related Links

- System Turns Imagined Handwriting into Text

- Scientists Create Speech Using Brain Signals

- Brain Mapping of Language Impairments

- How the Brain Sorts Out Speech Sounds

- Understanding How We Speak

- Device Restores Movement to Paralyzed Limbs

- Voice, Speech, and Language

References

Neuroprosthesis for Decoding Speech in a Paralyzed Person with Anarthria. Moses DA, Metzger SL, Liu JR, Anumanchipalli GK, Makin JG, Sun PF, Chartier J, Dougherty ME, Liu PM, Abrams GM, Tu-Chan A, Ganguly K, Chang EF. N Engl J Med. 2021 Jul 15;385(3):217-227. doi: 10.1056/NEJMoa2027540. PMID: 34260835.

Funding

NIH’s National Institute on Deafness and Other Communication Disorders (NIDCD); Facebook; Joan and Sandy Weill and the Weill Family Foundation; Bill and Susan Oberndorf Foundation; William K. Bowes, Jr. Foundation; Shurl and Kay Curci Foundation.